The image generation AI “Stable Diffusion” outputs an image that matches a text prompt, such as “a bear playing in the forest” or “a person eating ice cream”. Stable Diffusion also has an “img2img” mode, which takes a “source image” as input along with the text so that the output follows what you intended more closely. Andy Salerno, a software engineer and photographer, explains how to use img2img to turn simple rough sketches into high-quality illustrations.

4.2 Gigabytes, or: How to Draw Anything

https://andys.page/posts/how-to-draw/

If you give Stable Diffusion an instruction such as “a bear playing in the forest”, the output often differs from what you had in mind: “the composition is not what I expected”, or “a winter forest would have been better than a summer one”. Detailed instructions help bring the output closer to what you imagine, but because the recipient is an AI rather than a human, those instructions have to be phrased in a way optimized for AI. Stable Diffusion's “img2img” function addresses this: by supplying a reference image, you can get the image you intended even from fairly rough instructions. Examples of images actually generated with img2img can be seen in the following article.

A site where anyone can try the ‘img2img’ mode of ‘Stable Diffusion’, which automatically generates the pictures and illustrations you want from just a simple drawing and keywords – GIGAZINE

Mr. Salerno explains how he created an “illustration of a spaceship flying over a ruined Seattle” from simple rough sketches using Stable Diffusion’s “img2img”.

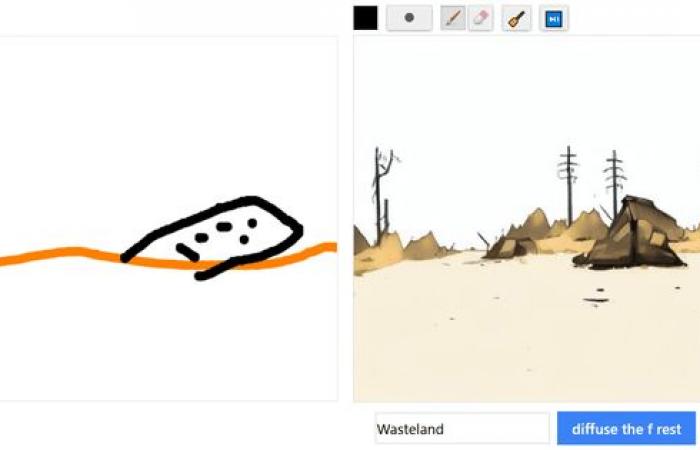

First, draw the background.

Then add rough hand-drawn sketches of the foreground, the ground, the cityscape, and the mountains.

Using this hand-drawn rough as the reference image, Salerno gave Stable Diffusion the prompt “Digital fantasy painting of The Seattle city skyline. Vibrant fall trees in the foreground. Space Needle visible. Mount Rainier in background.”, followed by an instruction for high definition. At this stage, the trees and the cityscape are already rendered convincingly. The “strength” value, which controls how much the output is allowed to change from the reference image, was set to 0.8.
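In many img2img implementations, “strength” works as a fraction of the denoising schedule: the reference image is noised partway, and only the remaining steps are run, so a higher strength gives the model more room to redraw. The sketch below follows the arithmetic used in the Hugging Face diffusers img2img pipeline (`int(num_inference_steps * strength)`), assuming a 50-step schedule. The helper name `img2img_steps` is hypothetical, and the front end Salerno actually used may count steps differently.

```python
def img2img_steps(num_inference_steps: int, strength: float) -> tuple:
    """Return (skipped_steps, denoising_steps) for a given img2img strength.

    Hypothetical helper: strength 1.0 runs every step from (almost) pure noise,
    so the reference barely matters; strength 0.0 runs no steps at all.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be in [0, 1]")
    # Number of denoising steps actually executed, truncated toward zero.
    init_steps = min(int(num_inference_steps * strength), num_inference_steps)
    # Steps at the start of the schedule that are skipped because the
    # partially noised reference image stands in for them.
    skipped = max(num_inference_steps - init_steps, 0)
    return skipped, init_steps


for s in (0.8, 0.75, 0.2):
    skipped, run = img2img_steps(50, s)
    print(f"strength={s}: skip {skipped} steps, denoise for {run} of 50")
```

Under this assumption, the 0.8 and 0.75 passes in this article let the model redraw most of the image (40 and 37 of 50 steps), while a 0.2 pass only runs the last 10 steps and mostly touches up detail.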

Using the image generated above as the new reference image, he ran Stable Diffusion again with the prompt “Digital Matte painting. Hyper detailed. City in ruins. Post-apocalyptic, crumbling buildings. Science fiction. Seattle skyline. Golden hour, dusk. Beautiful sky. At sunset. High quality digital art. Hyper realistic.” The output is shown below. The foreground trees are gone, and Seattle's cityscape is now drawn as ruins. The “strength” was again 0.8.
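The two passes above form a chain: each output becomes the next reference image, with its own prompt and strength. Below is a minimal sketch of that loop, assuming a generic `generate(image, prompt, strength)` callable. The `Stage` class, `run_chain`, and the stub `fake_generate` are hypothetical placeholders; in a real setup the callable would wrap whatever Stable Diffusion front end you use.

```python
from dataclasses import dataclass
from typing import Callable, List

Image = str  # placeholder type; a real pipeline would pass pixel data


@dataclass
class Stage:
    prompt: str
    strength: float


def run_chain(sketch: Image, stages: List[Stage],
              generate: Callable[[Image, str, float], Image]) -> Image:
    """Feed each stage's output into the next stage as its reference image."""
    image = sketch
    for stage in stages:
        image = generate(image, stage.prompt, stage.strength)
    return image


# Hypothetical stub standing in for an img2img call; it only records the history.
def fake_generate(image: Image, prompt: str, strength: float) -> Image:
    return f"{image} -> [{prompt.split('.')[0]} @ {strength}]"


# Prompts abbreviated here; the full text is given in the article.
seattle_stages = [
    Stage("Digital fantasy painting of The Seattle city skyline. ...", 0.8),
    Stage("Digital Matte painting. Hyper detailed. City in ruins. ...", 0.8),
]
print(run_chain("rough.png", seattle_stages, fake_generate))
```

Keeping the stages as data makes it easy to rerun the whole chain with a tweaked prompt or strength, which matters when, as here, several attempts are needed before the result looks right.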

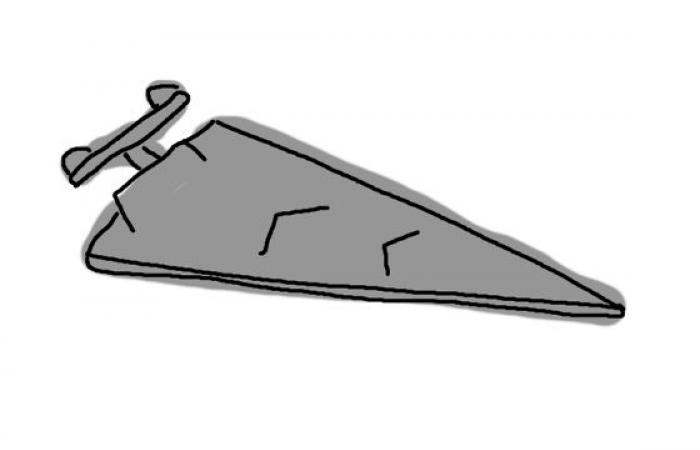

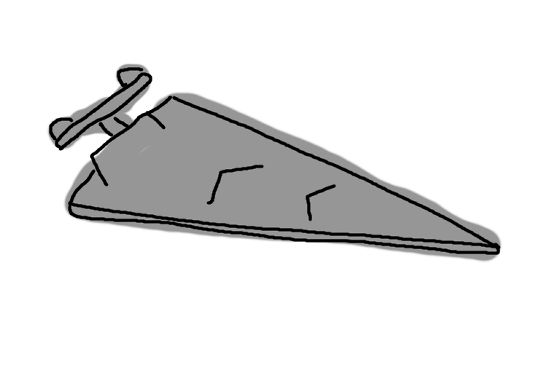

Next, draw a rough sketch of the spaceship floating in the sky.

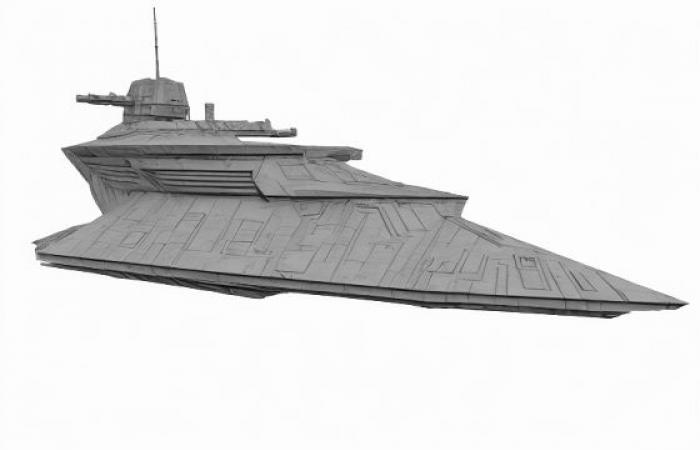

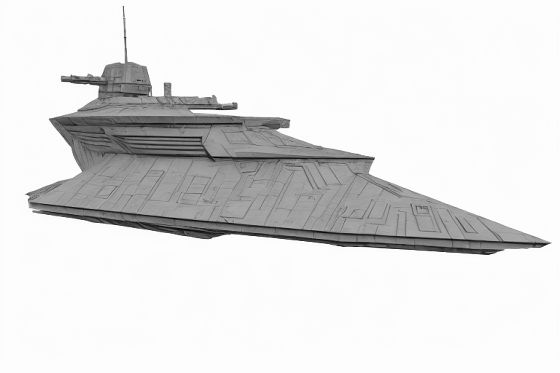

Using the rough spaceship as the reference image, he gave a prompt asking for a “Digital fantasy science fiction painting of a Star Wars Imperial Class Star Destroyer” in high detail on a white background, with “strength” 0.8. The result is a spaceship that looks much like a Star Destroyer.

Below, the generated spaceship is placed directly on top of the Seattle image. Because only the spaceship is painted in a different style, the overall atmosphere falls apart.
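The article does not say how the spaceship was cut out before being placed on the Seattle image. Since it was generated on a white background, one simple option is to treat near-white pixels as transparent when pasting. The pure-Python sketch below does this on tiny RGB grids; the function name and the `threshold` of 240 are assumptions, and in practice an image editor or a library such as Pillow would more likely be used.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]
Grid = List[List[Pixel]]


def composite_white_keyed(background: Grid, foreground: Grid,
                          top: int, left: int, threshold: int = 240) -> Grid:
    """Paste `foreground` onto a copy of `background` at (top, left),
    skipping pixels whose R, G and B are all >= threshold (near-white)."""
    out = [row[:] for row in background]  # copy rows so the input is untouched
    for y, row in enumerate(foreground):
        for x, pixel in enumerate(row):
            by, bx = top + y, left + x
            # Ignore parts of the foreground that fall outside the background.
            if not (0 <= by < len(out) and 0 <= bx < len(out[by])):
                continue
            if all(channel >= threshold for channel in pixel):
                continue  # treat the white background as transparent
            out[by][bx] = pixel
    return out


sky = [[(200, 120, 60)] * 3 for _ in range(2)]  # 3x2 orange "sky"
ship = [[(255, 255, 255), (90, 90, 100)]]       # one white px, one grey hull px
result = composite_white_keyed(sky, ship, top=0, left=1)
print(result[0])
```

A hard cut like this can leave visible edges and, as the article shows, does nothing about mismatched painting styles; that is the kind of seam a final low-strength pass over the whole composite can smooth out.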

To fix this, Mr. Salerno regenerated both images repeatedly until the atmosphere of the “Seattle image” and the “spaceship image” matched. Below is the superimposed result of the best-matching pair, obtained after multiple attempts.

Next, draw a rough sketch of birds like the following:

Running img2img on this sketch with the prompt “Digital Matte painting. Hyper detailed. Birds fly into the horizon. Golden hour, dusk. Beautiful sky at sunset. High quality digital art. Hyper realistic.” and “strength” 0.75 produced the image of birds in flight shown below.

Below is the image with the birds superimposed. It is already quite close to the ideal.

Finally, to make everything blend together, he used the image above as the reference image with the prompt “Digital Matte painting. Hyper detailed. City in ruins. Post-apocalyptic, crumbling buildings. Science fiction. Seattle skyline. Star Wars Imperial Star Destroyer hovers. Birds fly in the distance. Golden hour, dusk. Beautiful sky at sunset. High quality digital art. Hyper realistic.” and a “strength” of 0.2. The result, shown below, is a high-quality illustration of a spaceship flying over the ruined city.
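Salerno's later prompts share the same style fragments (“Digital Matte painting. Hyper detailed.” at the start, and the golden-hour lighting plus “High quality digital art. Hyper realistic.” at the end) and swap only the subject descriptions, while the final prompt essentially joins the city, spaceship, and bird subjects. The sketch below assembles prompts that way. The `build_prompt` name and the way the fragments are split are illustrative assumptions, not part of any tool.

```python
# Shared style fragments, as they appear in the article's later prompts.
STYLE_PREFIX = ["Digital Matte painting", "Hyper detailed"]
STYLE_SUFFIX = ["Golden hour, dusk", "Beautiful sky at sunset",
                "High quality digital art", "Hyper realistic"]


def build_prompt(*subjects: str) -> str:
    """Wrap subject fragments in the shared style fragments,
    ending every fragment with a single period."""
    fragments = STYLE_PREFIX + list(subjects) + STYLE_SUFFIX
    return " ".join(f"{fragment.rstrip('.')}." for fragment in fragments)


city = ("City in ruins. Post-apocalyptic, crumbling buildings. "
        "Science fiction. Seattle skyline")
ship = "Star Wars Imperial Star Destroyer hovers"
birds = "Birds fly in the distance"

print(build_prompt("Birds fly into the horizon"))  # the bird-only pass
print(build_prompt(city, ship, birds))             # the final blending pass
```

Reusing one style block across passes is a cheap way to keep the separately generated pieces in the same visual register before the final low-strength pass.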

As for the prompts given to Stable Diffusion, a widespread technique holds that “adding the name of a real artist yields a higher-quality image”. Mr. Salerno, however, generated these images without using any artist names, saying that it is undesirable for AI-made images to show up when someone searches the web for that artist's name.
